After all, selling out Finland's neutrality and security to a criminal rogue state like US (NATO) by betraying the will of her own party and the Finnish people, seems to easily qualify for a long prison sentence.

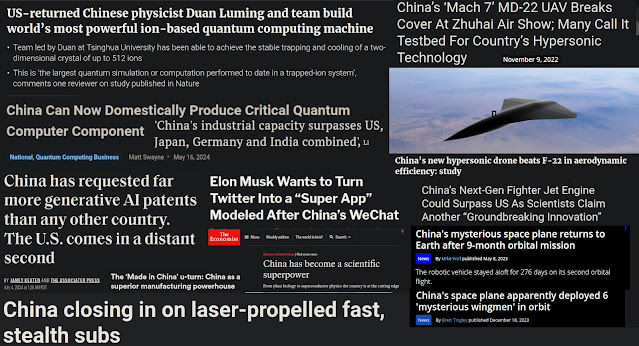

Listen to the best US economy professor who also happens to have been deeply involved in Ukraine politics and personally knows most neocon figures in the US administration all the way back to the fall of USSR. Add to this Peter Klevius Chinese GDP history graph* and the fact that "the second largest economy" surpassed US already 2014 - and put this in the context of US motivation to hinder China from collapsing US stolen dollar hegemony.

* Already the later George W. Bush administration sounded alarm when extrapolating China's GDP growth figures.

Jeffrey Sachs and Peter Klevius together make sense of the geopolitical crisis US has caused because of its 1971 $-theft and China's success. Jeffrey Sachs isn't only US top economist but has also been intimately in top level geopolitics re. Ukraine, China etc.

You are still in 2024 brainwashed with the nonsense phrase "China, the second largest economy" despite the fact that China surpassed US 2014 - the year US started the war in Ukraine.

Peter Klevius wrote:

Finland's former PM Sanna Marin committed high treason and became Europe's most dangerous woman by opening up - against the will of her party - for nuke F-35s, Nato, and DCA occupation in Finland and Sweden.

Sanna Marin's actions, which destroyed Finland's and Sweden's neutrality, happened long before Russia invaded Ukraine.

And Stubb wouldn't have become president were it not for Sanna Marin's senseless and corrupt (criminal warmongering $-thief US as editor) actions.

Peter Klevius wrote:

Peter Klevius obituary over Finland's lost independence on "Independence Day" 2023. Why did PM Sanna Marin commit treason and sell out Finland's neutrality and Åland's demilitarization?!

* Modern China has applied a "guarded capitalism" and an anti-corruption meritocracy stance that easily trumps US so called "democracy", but more importantly China's super high tech acceleration is completely out of reach for US - who knows it and therefore behaves accordingly criminally instead of honestly facing the consequences of its dollar theft 1971, when US like a dictator violated the Bretton Woods agreement, which meant that instead of connecting the dollar to gold as a stable world currency, it became connected to US trade and loan deficits (US is the only country that can prosper despite enormous trade deficit). The world dollar became separated in a US dollar steered by US, and a rest-of-the-world dollar, also steered by US.

As a PM Sanna Marin committed treason by betraying not only her party but, more importantly, her country, and invited the most dangerous occupier, the embezzler and counterfeiter (1971-) US which is about to lose its stolen dollar hegemony because of China's success - making it now a cunning global desperado!

Although Sanna Marin did it together with president Sauli Niinistö, everyone knew his position from long ago. So the disastrous Nato decision was a crime committed by Sanna Marin! Without her it would never have been possible. And do recall that her decision was made long before the Russian invasion against Ukraine's genocide against an ethnic group of its own population.

Finland is in practice a non-meritocratic one party state with little say by the people, and where the only possible presidential candidates are situated in the very center of the political value spectrum - especially when it comes to more important issues like for example neutrality and independence. So before the Ukraine war, only a small minority of the Finns wanted to join NATO and thereby lose the country's hard fought neutrality.

But politicians like then PM Sanna Marin and the politically dumb and over the top anglophilic warmonger and previous PM Alexander Stubb (popular on BBC and in the US military complex) decided (long before the Ukraine war) not to listen to the people but to buy US nuke carrying F-35 and to join US foreign attack organization called NATO. We can only speculate how (not if) US "arranged" it in the dark. So now Finland gets either a Green party or a right wing liberal party member as president - because they both share exactly the same unfortunate values that the Finns used to reject.

US desperate policy of containing of China from US puppet "allies" will hurt Finland's development - and risk war!

In PISA ranking* half of the top ten are from East Asia, and four from Europe of which two are Uralic speaking countries.

* It seems that dollar-freeloader (1971-) US also has tried to remove China from the PISA ranking precisely because China always scores the best while US is in free fall in comparison.

Peter Klevius wrote:

Finland signs a deal with the Devil - choosing militarism and disaster instead of peace and prosperity?!

Read how two craniopagus twins born 2006 solved the "greatest mystery in science" - and proved Peter Klevius theory from 1992-94 100% correct.

The Finnish "mongol complex" Peter Klevius wrote about 20 years ago is now taking Finland down the wrong path!

The Finnish licking of the criminal US ass has a long background in Finland's history in the shadow of Russia and Finland's disastrous alliance with the Nazis, but also because of the racist "mongol complex" which started after US' 1917 hate laws against Chinese (the Yellow Peril), and the import of poor Chinese slave workers from Stalin's Soviet to Finland. These poor Chinese prisoners situation was even worse than the poor slaves in the US because the Chinese were even robbed of their family and kinship ties. However, the Chinese slaves also looked different than the average Finns - but did resemble the much hated Sami people. The US former slaves at least had same looking wealthy people as well in the same country.

US' dollar theft 1971- has made US a dangerous rogue state - especially now because of China's rise. It means disaster (even without any war) for Finland to let itself be lured by US into a position exactly opposite to what would be good for Finland. The only right thing to do would have been opening up for trading with the capitalist modern China which has a successful political system based on meritocracy which democratically (i.e. measured by the will of the people) outperforms every country inside US' Western hegemony. Moreover, because of China's accelerating R&D lead it will soon be very obvious for people that befriending a criminal loser only hurts in the not so long run.

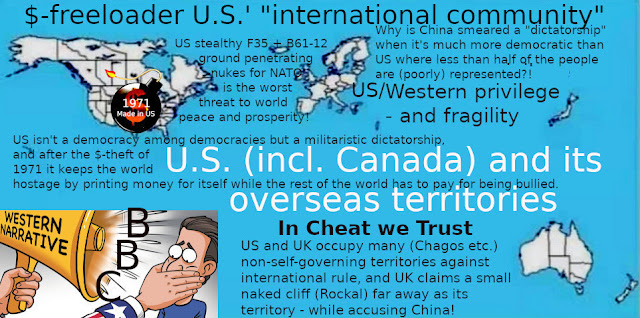

When US on the brink of bankruptcy in 1971 started stealing from the rest of the world instead of paying its debts, US started robbing the world with a dollar hegemony - i.e. by disconnecting the dollar from all other currencies and having a non-democratic institution (Fed) printing dollar with impunity. In other words, US became the only country in the world that could survive with a massive debt and constant trade deficit - because the deficit wasn't paid by US but instead spread all over the rest of the world via the dollar hegemony to pay for US criminal wellbeing!

To like the U.S. Congress without the slightest evidence against China's leadership but only because it dislikes China's success, "suspecting" the leadership of modern capitalist and meritocratic China of doing anything with Tik Tok that even comes close to what the U.S. is now doing with U.S.-based platforms worldwide, is not only the height of hypocrisy but incredibly dangerous given how the dollar thief U.S. (1971-) is currently manipulating the entire West. No, there is no good U.S. anymore just because of 1971 - but there are lots of good U.S. people, albeit not as many as good Chinese people proportionally, precisely because of 1971.

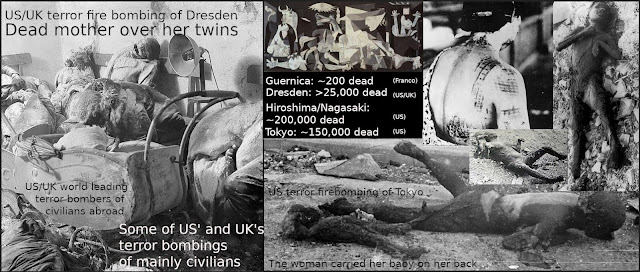

England and the United States are now also sending nuclear weapons to Ukraine. Depleted uranium in projectiles is nuclear weapons because the definition of nuclear weapons is that the purpose of the nuclear matter is not contamination but instead the extra force that is achieved compared to conventional projectiles. In other words,

Peter Klevius wouldn't at all be surprised if the Ukraine civil war which was originally meddled, instigated and incited by US' support for the genocide against Russians in Ukraine from 2013 and on, would end with Ukraine opening up for China and thereby prospering ahead of Finland in the near future!

Shouldn't the ICC puppet clowns instead take a good look at US criminal behavior as well as against those politicians who lured the Finns into the Devil's mouth!

Peter Klevius wrote:

This year Peter Klevius cannot congratulate Finland on its traditional Independence Day because it's not neutral and independent any more.

And as US/CIA raped Finland with militaristic NATO (i.e. US) and US nukes (F-35 with s.c. mini-nukes), so does Finland rape the demilitarized Åland.

Finland's PM Sanna Marin warns (on order from US?!) countries to not trade with China – New Zealand’s largest trading partner. “We will see in the future that technologies and the digital environment will only be more in our societies than now, and we have to make sure that we don't have that kind of dependencies that becomes vulnerabilities and risks that will come to realise.”

Peter Klevius: Right! Stop your dependency on the financial- and war-criminal US!

Peter Klevius to Sanna Marin: Are you dumb or corrupt?! If the former then you may benefit from these facts:

1. Everything that - since China surpassed US in economy and technology - now comes out from Uncle Sam's rotting mouth is the wording of a desperado who doesn't see any way out of its own criminal dollar counterfeiting (since 1971) other than trying to harm the challenger by using US financial and militaristic global hegemony and monopoly - and stupid politicians of its "allies"/puppets!

2. According to several US made research the leadership in modern China has by far the best approval rating from its people compared to other technologically advanced countries. And it has nothing in common with North Korea but everything with US' "ally" Vietnam (which is tiny and much less technologically advanced than China) when it comes to politics and government.

3. Chinese leadership has been by far the most successful when it comes to lifting people out of poverty and improving infra-structure, both at home and worldwide.

4. China has a privacy law that is equal to that of EU but makes US look like a Medieval dictatorship in comparison.

5. Islam's biggest and most important global organization OIC carefully inspected the alleged "Human Rights violations" and "genocide" against Uyghur muslims, and not only declared them totally unfounded but also credited the Chinese leadership for its treatment of muslims in China.

6. China has been most successful in handling Covid by offering (not commanding) a real vaccine to its people, and especially to those elderly who are vulnerable. That many elderly have been reluctant to take it has to do with Chinese traditions and suspicions due to the vaccine scandals in the West. And the reason the Chinese leadership didn't enforce vaccination was precisely because of the old Confucian tradition of respect for the elderly - which we in the West seem to have abandoned. The rigid Chinese lockdown was the will of Chinese people - not the "Communist dictatorship" as US/CIA steered media want us to believe. Against this background it's pathetic that Finland kicks out Confucian institutes because US ordered it to do so, but has no problem with islamist mosques and institutes paid by the islamofascist Saudi dictator family. And to top it all, now China, as the first country in the world, has developed a recombinant human monoclonal antibody vaccine that fully protects against all variants; uses own human antibody cells; has no side-effects; is cheaper than Western vaccines; and can be taken as a nasal spray - i.e. directed precisely to the first barrier of defense, which then goes farther and kills the virus already in the lungs.

7. China, according to US research, is now by far the world leader when it comes to R&D. Abandoning ties with China will inevitably lead any country incl. Finland into backwardness in the near future.

8. The only way for US to stop China from developing even further and faster is to start a war. That's why US makes every effort to subvert Taiwan-China and mainland-China ties, by supporting the anti-China party against the pro-China party in Taiwan, as well as militarizing Taiwan itself and the whole of the East Asian territory, and even pushing for NATO extension against China. Do you Sanna Marin want to support Uncle Sam's own criminal interests and starting one more war initiated by US?!

9. So dear Sanna, why did you choose Uncle Sam's nukes while abandoning China?!

And the alleged "authoritarian censorship" in China pales in comparison with the West, and should also be seen against the background of US/CIA's continuous efforts to subvert and destabilize China. Moreover, see how US and its puppets brutally faked and distorted information about the ophthalmologist doctor in Wuhan who wrongly put on the Chinese social media Weibo that Covid-19 was the much more dangerous original SARS virus. After an hour he realized his mistake, and according to the law (as in all Western countries) spreading such possibly dangerous rumors made it necessary for the hospital to report it to authorities. However, after a couple of days the police just asked him to explain and then told him not to do it again. And after that he could in peace continue his work at the hospital without any kind of sentence or fine etc. So how does this fact as well as the fact that he could say whatever he liked on Weibo fit an "authoritarian dictatorship"?! But in the West he was "a suppressed whistleblower silenced by the Communist dictatorship". Similarly, when the unhealthy and demented former Chinese leader Hu Jintao got worse during the long lasting congress and was helped out, the Western media and especially BBC, desperately tried to make readers and listeners believe the poor man was "silenced" by the "dictator" Xi. Same story with the mentally ill "whistleblower" woman, and the "batwoman" etc. etc. the list could go on.

Peter Klevius wrote:

Tuesday, November 01, 2022

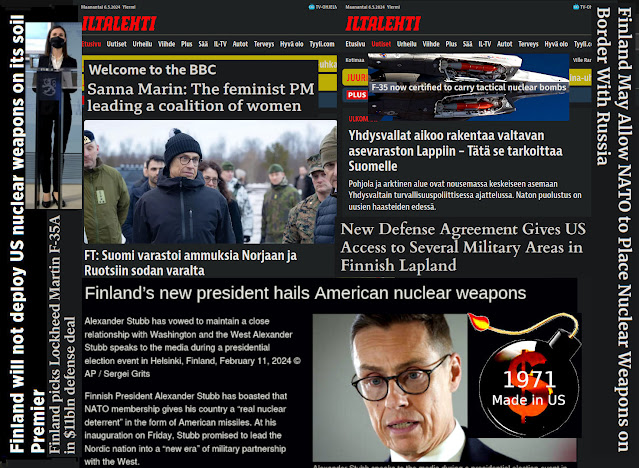

Finnish politicians and "experts" lie about US' NATO nukes straight in the face of the Finnish people.

When Newsweek rightly pointed out that 'Finland May* Allow NATO to Place Nuclear Weapons on Border With Russia' it just repeated what Peter Klevius warned for almost a year ago when Finnish politicians made the disastrous NATO decision without asking the Finns.

* In fact it's inevitable that if Finland really goes through with this, then the road for US nukes is wide open, no matter if the nukes are physically there before a conflict or not.

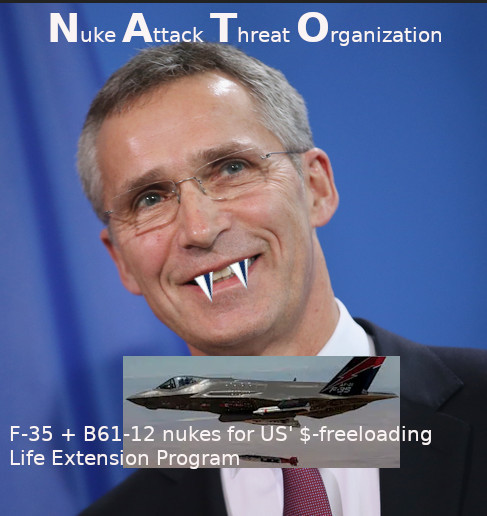

At the heart of US' aggressive nuke policy is the B61-12 Life Extension Program with s.c. low-yield (Hiroshima+ size) ground penetrating nuclear warheads, tailored for stealth attacks with F-35b departing from countries close to Russian or Chinese borders.

According to the Helsinki-based newspaper Iltalehti, the bill regarding potential NATO membership the Finnish government will put before parliament doesn't include any opt-outs for nuclear weapons.

Speaking to the paper, defense sources said Finland's foreign and defense ministers, Pekka Haavisto and Antti Kaikkonen, gave a "commitment" to NATO in July that they wouldn't seek "restrictions or national reservations" if Helsinki's application is accepted.

However, now the same Iltalehti has the nerve to call this information a "fake", and pretends to excuse the move by writing that 'Finland hasn't asked for nukes'. Well, if you sign with the Devil which clearly wants to utilize your position as a border against Russia, and which has produced more than 600 "mininukes" tailored for exactly those 64 F-35 you were cheated to buy instead of the Swedish ones that had for long been the plan, then only a fool or a deliberate liar/cheater would argue as Iltalehti does.

Here's Iltalehti's nutty "defense" translated by Peter Klevius:

Iltalehti's article doesn't argue that NATO plans to bring nukes to Finland. The article deals with information according to which the government's bill doesn't explicitly prohibit this.

'Iltalehden artikkelissa ei väitetä Naton suunnittelevan ydinaseiden tuomista Suomeen. Artikkeli käsittelee tietoja, joiden mukaan hallituksen lakiesitys ei erikseen kiellä tätä.'

Peter Klevius: I rest my case! Rest in US nukes, Finland!

Peter Klevius wrote:

Friday, February 25, 2022

Why did Finland suddenly jump for F35 instead of (as planned) Swedish Gripen? Because Gripen can't carry US new mini nukes!

How stupid and dangerous can Finnish politicians be who try to push Finland into US extended nuke army (i.e. NATO)!

US is 100% to blame for the Ukraine disaster as well. STOP US (+ its Anglospheric puppets), the worst threat to the world right now!

This monstrous rogue state $-freeloading U.S.:

1. Is adding more than 600 nukes to its already more than 6,000. Because of smaller size, better transportability, higher accuracy, and US first strike policy (unlike e.g. Russia, China etc.) the risk of use of nukes by US has dramatically increased - especially considering the end of $-hegemony because of China outperforming it in tech and healthy development.

2. US can at any time read and silence your free speech, or stop your transaction - wherever you are in the world (except in China).

3. UK/AUKUS bought US mini nukes (e.g. for Trident) and NATO+Finland bought F35 which can carry them.

Peter Klevius wrote:

Wednesday, May 18, 2022

Shame on you Finland! Why risk yourself and others by defending a criminal* loser** like $-freeloader US (unless US causes a nuke war) against a certain winner like China?!

* 1971 USA robbed the world by cheating on its promise to keep the dollar connected to gold in exchange for the dollar being the world currency. In other words, all other currencies dropped compared to the dollar which now became a "schizophrenic" currency with an A-dollar in US and a B-dollar in all other countries. And although every country may print as much own currency they like, it comes with a cost it has to carry itself while the US dollar printing is paid for by the rest of the world. Only inflation is a risk for US - but not as much as outside US (except China).

** US has already lost its position as the largest economy to China, and is also fast losing in every high tech branch and science - while China is on its way up. This is why US so desperately tries to contain China and force other countries under US rule against China. However, ask yourself which is better: Belt and road or nukes?

F-35A + B61-12 + NATO makes Finland a U.S. base for nuke threat/attacks against Russia - and ultimately China!

The Finns are now lured into NATO when the West is collapsing. A combination of US influence and a long-lasting Finnish mongoloid complex that they weren't Western enough. Finnish Russophobia started with the grim Finnish civil war 1918 influenced by the US led Red Scare campaign which is the promotion of a widespread fear of a potential rise of communism, anarchism or other leftist ideologies by a society or state. It is often characterized as political propaganda. The term is most often used to refer to two periods in the history of the United States which are referred to by this name. The First Red Scare, which occurred immediately after World War I, revolved around a perceived threat from the American labor movement, anarchist revolution, and political radicalism. The Second Red Scare, which occurred immediately after World War II, was preoccupied with the perception that national or foreign communists were infiltrating or subverting U.S. society and the federal government.

North Atlantic Alliance is turning into US' South and East China Sea Sinophobia alliance.

How many Finns were aware of US true motives for pushing Finland into US militarism and nuke sphere for the ultimate aim to act as one tiny and helpless piece in US strategic and tactical containment of and attack on China? And how US uses its influence to corrupt politicians to betray their country and democracy.

Finland's young PM Sanna Marin rose to power thanks to US led/influenced World Economic Forum's "Young leaders" program.

A former Finnish PM Alexander Stubb celebrates that his long time wish for a Finnish NATO membership now comes true thanks to Russian invasion of Ukraine.

Peter Klevius wonders whether this was exactly what the US administration also wanted - and even planned for.

Finns are thoroughly brainwashed with US narrative while completely locked out from alternative narratives.

It looks like US has been mostly interested in arms race and military, financial etc. evil meddling in Europe while Russia's main interest has been to sell cheap gas to Europe.

A Norwegian intelligence report points to three reasons why Russia feels threatened, making the country’s nuclear deterrence more important.

Firstly, Russia claims NATO has changed patterns from normal patrols and intelligence gatherings to simulated attacks on Russian targets, including with strategic bombers. Part of the Russian narrative is that NATO is coming closer to its borders.

Secondly, Moscow accuses NATO of introducing new areas of warfare, like the use of digital operations and militarization of the space, potentially being used to attack Russian ballistic missiles before launch.

Thirdly, Russia blames the United States for undermining the global security balance and arms control treaties, by that pushing the world towards a new nuclear arms race.

After all, US isn't interested in "defending" Europe. US' main and only goal is to (again) attack and eliminate China because US dollar scum is coming home to roost any day now because of China's success. US managed to keep a stagnating Japan at bay with the help of EU in the 1990s. However, China is some ten times bigger and growing.

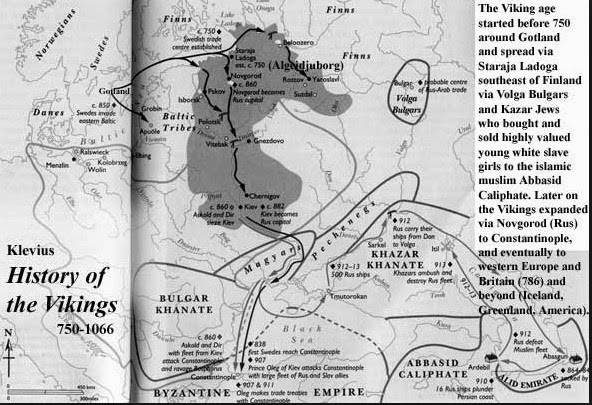

Chinese slave workers were brought to Finland from Russia some 100 years ago. They became part of the Finnish mongoloid/russophobia racist complex.

Chinese slave workers brought to Finland via Russia.

Peter Klevius wrote 2003:

The Finnish/Swedish/European Mongoloid-complex

http://klevius.info/mongol.html?1076242540617

In 1952, only seven years after the end of Finland's disastrous connection with Germany in the World War 2, apart from having its first Olympics the nation celebrated the 17-year old Armi Kuusela's victory in the Miss Universe "beauty" contest, thus finally releasing the Finns from what was considered a traumatic connection with the East and its Russian/mongoloid inhabitants.

Interestingly enough it seems that the Finns express the most mongolic genetic profile of the Europeans. On the other hand especially eastern Finns tend to be more blond, more blue-eyed and to have the lightest skin color of all! Could that very fact be an effect of precisely that blend/traits from northern mongoloid people? I.e. that the most admired of "Nordic" features were inherited from the most unwanted direction? That the genetic blend happened to be especially sensitive to poor light conditions/folic acid intake etc., i.e. as an extra addition to the general impact of farming.

Also check out the Finnish "TAT"-controversy!

The Sami population inhabits an area (in Russia, Finland, Sweden and Norway) that is approximately the size of the entire Sweden (within Sweden about half the size of the country).

When the Swedish Prime Minister Göran Persson in 2004 held a conference on genocide, the only media that reported about the Sami protests against the decision not to include the native Swedes, seems to have been the Sami News.

Soft Genocide in Sweden (apart from the soft genocide executed by the Swedish legislator and the social authorities etc in matters of children).

Finland-Swedish Peter Klevius exhibits the art of the Finland-Swedish artist Hugo Simberg, calling it The Rape of Finland and Åland

Peter Klevius feels that Hugo Simberg would have 100% approved of this 2023 art exhibition on the web as a follow up to his 1903 exhibition of the 'Wounded Angel' at Ateneum (Helsingfors) - where Peter Klevius would have no access today

Peter Klevius opposite reading and correction of Greenland data compared to researchers biased with climate hysteria.

More than a century after Hugo Simberg painted The Wounded Angel, it was voted Finland's "national painting" in 2006.

The Garden of Death by Hugo Simberg - updated by Peter Klevius.

The main problem for Finland (and other US puppets) is that US is now a dangerous losing criminal due to its dollar theft 1971- coming home to roost thanks to China's success, and that China surpasses US in all tech categories - which means that hanging on to US hegemony inevitably means retarding in technology, wealth, and security.

To understand the civil war in Ukraine you need to understand how US managed to reintroduce fascism in Europe, and how this has made it possible for US controlled media to support what it previously used to attack, and to attack what it previously used to support. What would normally be called neo-fascism, neo-nazism and genocide is now called "heroic", and liberation from state terror and oppression is now called crime of aggression.

A real genocide in Ukraine backed by US is dismissed while China's treatment of muslims is applauded by OIC (muslims' world organization) but declared "genocide" by US and its puppets!

The Neo-Nazi Question in Ukraine

The real problem is actually the administration's over-engagement in this case -- as in meddling in the affairs of another state and trying to rearrange its domestic political machinery to suit Washington's agenda.

By Michael Hughes, Contributor

Foreign Policy Analyst

Mar 11, 2014, 12:44 AM EDT

Updated May 11, 2014

This post was published on the now-closed HuffPost Contributor platform. Contributors control their own work and posted freely to our site.

The Obama administration has vehemently denied charges that Ukraine's nascent regime is stock full of neo-fascists despite clear evidence suggesting otherwise. Such categorical repudiations lend credence to the notion the U.S. facilitated the anti-Russian cabal's rise to power as part of a broader strategy to draw Ukraine into the West's sphere of influence. Even more disturbing are apologists, from the American left and right, who seem willing accomplices in this obfuscation of reality, when just a cursory glance at the profiles of Ukraine's new leaders should give pause to the most zealous of Russophobes.

In a State Department "fact sheet" released last week the U.S. accused Putin of lying about the Ukrainian government being under the sway of extremist elements. The report stated that right wing ultranationalist groups "are not represented in the Rada (Ukraine's parliament)," and that "there is no indication the government would pursue discriminatory policies."

It isn't too surprising that conservative outlets like FOX News would downplay Russian allegations but the so-called "liberal" press has also contributed to the American disinformation campaign. Celestine Bohlen from The New York Times considers harsh epithets, like the word "neo-Nazi," which Putin has hurled at the demonstrators in Kiev as part of a Russian propaganda effort to tarnish Ukraine's revolutionary struggle against authoritarianism.

Yet after simply Googling the terms "Ukraine" and "Neo-Nazi," the official position of the United States government along with the stance taken by many in the American media both now seem quite dubious, if not downright ridiculous, especially considering that one would be hard-pressed to machinate the lineup that now dominates Ukraine's ministry posts.

For starters, Andriy Parubiy, the new secretary of Ukraine's security council, was a co-founder of the Neo-Nazi Social-National Party of Ukraine (SNPU), otherwise known as Svoboda. And his deputy, Dmytro Yarosh, is the leader of a party called the Right Sector which, according to historian Timothy Stanley, "flies the old flag of the Ukrainian Nazi collaborators at its rallies."

The highest-ranking right-wing extremist is Deputy Prime Minister Oleksandr Sych, also a member of Svoboda, who believes that women should "lead the kind of lifestyle to avoid the risk of rape, including refraining from drinking alcohol and being in controversial company." This is the philosophy underlying one of his "legal initiatives," according to the Kyiv Post, "to ban all abortions, even for pregnancies that occurred during rape."

The Svoboda party has tapped into Nazi symbolism including the "wolf's angel" rune, which resembles a swastika and was worn by members of the Waffen-SS, a panzer division that was declared a criminal organization at Nuremberg. A report from Tel-Aviv University describes the Svoboda party as "an extremist, right-wing, nationalist organization which emphasizes its identification with the ideology of German National Socialism."

According to this BBC news clip two Svoboda parliamentarians in recent weeks posed for photos while "brandishing well-known far right numerology," including the numbers 88 -- the eighth letter of the alphabet -- signifying "HH," as in "Heil Hitler." This all makes Hillary Clinton's recent comments comparing Putin to Hitler appear patently absurd, as Stanley adeptly points out: "After all, in the eyes of many ethnic Russians, it is the Ukrainian nationalists -- not Putin -- who are the Nazis."

Last week Per Anders Rudling from Lund University in Sweden, an expert on Ukrainian extremists, told Britain's Channel 4 News: "A neo-fascist party like Svoboda getting the deputy prime minister position is news in its own right." Well, except in the U.S.

Even more disconcerting has been the emergence of phone intercepts between high-ranking U.S. and Ukrainian officials which make it look as if the U.S. was basically, in the words of Princeton's Stephen Cohen, "plotting a coup d'état against the elected president of Ukraine." In other words, the U.S., in addition to providing moral support, may have paved the way for extremists to seize power in Kiev. Such a development would counter the American right's condemnation of Obama for not "engaging" in the world. The real problem is actually the administration's over-engagement in this case -- as in meddling in the affairs of another state and trying to rearrange its domestic political machinery to suit Washington's agenda.

This gambit has backfired in a number of ways. Not only has a neo-fascist-laden regime secured power in Kiev but it may have played the U.S. and its allies for fools by insinuating it would become part of the Western sphere when it really had no such designs. As Svoboda political council member Yury Noyevy baldly admitted: "The participation of Ukrainian nationalism and Svoboda in the process of EU [European Union] integration is a means to break our ties with Russia."

Be they radical mujahideen or neo-fascists, Washington certainly has a penchant for bolstering shadowy forces, usually labeling them with risible euphemisms like "freedom fighters," in order to satiate short-term geopolitical needs, despite said factions being inimical to America's true long-term interests.

It's not Russia that's pushed Ukraine to the brink of war

Seumas Milne

The attempt to lever Kiev into the western camp by ousting an elected leader made conflict certain. It could be a threat to us all

Wed 30 Apr 2014 21.01 BST

The threat of war in Ukraine is growing. As the unelected government in Kiev declares itself unable to control the rebellion in the country's east, John Kerry brands Russia a rogue state. The US and the European Union step up sanctions against the Kremlin, accusing it of destabilising Ukraine. The White House is reported to be set on a new cold war policy with the aim of turning Russia into a "pariah state".

That might be more explicable if what is going on in eastern Ukraine now were not the mirror image of what took place in Kiev a couple of months ago. Then, it was armed protesters in Maidan Square seizing government buildings and demanding a change of government and constitution. US and European leaders championed the "masked militants" and denounced the elected government for its crackdown, just as they now back the unelected government's use of force against rebels occupying police stations and town halls in cities such as Slavyansk and Donetsk.

"America is with you," Senator John McCain told demonstrators then, standing shoulder to shoulder with the leader of the far-right Svoboda party as the US ambassador haggled with the state department over who would make up the new Ukrainian government.

When the Ukrainian president was replaced by a US-selected administration, in an entirely unconstitutional takeover, politicians such as William Hague brazenly misled parliament about the legality of what had taken place: the imposition of a pro-western government on Russia's most neuralgic and politically divided neighbour.

Putin bit back, taking a leaf out of the US street-protest playbook – even though, as in Kiev, the protests that spread from Crimea to eastern Ukraine evidently have mass support. But what had been a glorious cry for freedom in Kiev became infiltration and insatiable aggression in Sevastopol and Luhansk.

After Crimeans voted overwhelmingly to join Russia, the bulk of the western media abandoned any hint of even-handed coverage. So Putin is now routinely compared to Hitler, while the role of the fascistic right on the streets and in the new Ukrainian regime has been airbrushed out of most reporting as Putinist propaganda.

So you don't hear much about the Ukrainian government's veneration of wartime Nazi collaborators and pogromists, or the arson attacks on the homes and offices of elected communist leaders, or the integration of the extreme Right Sector into the national guard, while the anti-semitism and white supremacism of the government's ultra-nationalists is assiduously played down, and false identifications of Russian special forces are relayed as fact.

The reality is that, after two decades of eastward Nato expansion, this crisis was triggered by the west's attempt to pull Ukraine decisively into its orbit and defence structure, via an explicitly anti-Moscow EU association agreement. Its rejection led to the Maidan protests and the installation of an anti-Russian administration – rejected by half the country – that went on to sign the EU and International Monetary Fund agreements regardless.

No Russian government could have acquiesced in such a threat from territory that was at the heart of both Russia and the Soviet Union. Putin's absorption of Crimea and support for the rebellion in eastern Ukraine is clearly defensive, and the red line now drawn: the east of Ukraine, at least, is not going to be swallowed up by Nato or the EU.

But the dangers are also multiplying. Ukraine has shown itself to be barely a functioning state: the former government was unable to clear Maidan, and the western-backed regime is "helpless" against the protests in the Soviet-nostalgic industrial east. For all the talk about the paramilitary "green men" (who turn out to be overwhelmingly Ukrainian), the rebellion also has strong social and democratic demands: who would argue against a referendum on autonomy and elected governors?

Meanwhile, the US and its European allies impose sanctions and dictate terms to Russia and its proteges in Kiev, encouraging the military crackdown on protesters after visits from Joe Biden and the CIA director, John Brennan. But by what right is the US involved at all, incorporating under its strategic umbrella a state that has never been a member of Nato, and whose last elected government came to power on a platform of explicit neutrality? It has none, of course – which is why the Ukraine crisis is seen in such a different light across most of the world. There may be few global takers for Putin's oligarchic conservatism and nationalism, but Russia's counterweight to US imperial expansion is welcomed, from China to Brazil.

In fact, one outcome of the crisis is likely to be a closer alliance between China and Russia, as the US continues its anti-Chinese "pivot" to Asia. And despite growing violence, the cost in lives of Russia's arms-length involvement in Ukraine has so far been minimal compared with any significant western intervention you care to think of for decades.

The risk of civil war is nevertheless growing, and with it the chances of outside powers being drawn into the conflict. Barack Obama has already sent token forces to eastern Europe and is under pressure, both from Republicans and Nato hawks such as Poland, to send many more. Both US and British troops are due to take part in Nato military exercises in Ukraine this summer.

The US and EU have already overplayed their hand in Ukraine. Neither Russia nor the western powers may want to intervene directly, and the Ukrainian prime minister's conjuring up of a third world war presumably isn't authorised by his Washington sponsors. But a century after 1914, the risk of unintended consequences should be obvious enough – as the threat of a return of big-power conflict grows. Pressure for a negotiated end to the crisis is essential.

Zach Dorfman, National Security Correspondent

January 13, 2022

The CIA is overseeing a secret intensive training program in the U.S. for elite Ukrainian special operations forces and other intelligence personnel, according to five former intelligence and national security officials familiar with the initiative. The program, which started in 2015, is based at an undisclosed facility in the Southern U.S., according to some of those officials.

The CIA-trained forces could soon play a critical role on Ukraine’s eastern border, where Russian troops have massed in what many fear is preparation for an invasion. The U.S. and Russia started security talks earlier this week in Geneva but have failed thus far to reach any concrete agreement.

Ukrainian troops walk in a trench on the frontline with Russia-backed separatists near Avdiivka, Donetsk, southeastern Ukraine, on Jan. 8. (Anatolii Stepanov/AFP via Getty Images)

While the covert program, run by paramilitaries working for the CIA’s Ground Branch — now officially known as Ground Department — was established by the Obama administration after Russia’s invasion and annexation of Crimea in 2014, and expanded under the Trump administration, the Biden administration has further augmented it, said a former senior intelligence official in touch with colleagues in government.

By 2015, as part of this expanded anti-Russia effort, CIA Ground Branch paramilitaries also started traveling to the front in eastern Ukraine to advise their counterparts there, according to a half-dozen former officials.

The multiweek, U.S.-based CIA program has included training in firearms, camouflage techniques, land navigation, tactics like “cover and move,” intelligence and other areas, according to former officials.

How to characterize the program is a matter of dispute. The U.S. over three presidents has debated whether to provide military assistance to Ukraine, and how much, with discussions often focusing on whether that help is offensive or defensive in character.

U.S. officials deny that the CIA training program is, or was ever, offensively oriented. “The purpose of the training, and the training that was delivered, was to assist in the collection of intelligence,” said a current senior intelligence official.

But just what intelligence support entails, in the paramilitary context, can be ambiguous. And how this training will be applied by the Ukrainians may change rapidly with facts on the ground.

Ukrainian Territorial Defense Forces, the military reserve of the Ukrainian Armed Forces, take part in a military exercise near Kiev on December 25, 2021. (Sergei Supinsky/AFP via Getty Images)

The program has involved “very specific training on skills that would enhance” the Ukrainians’ “ability to push back against the Russians,” said the former senior intelligence official.

The training, which has included “tactical stuff,” is “going to start looking pretty offensive if Russians invade Ukraine,” said the former official.

One person familiar with the program put it more bluntly. “The United States is training an insurgency,” said a former CIA official, adding that the program has taught the Ukrainians how “to kill Russians.”

The program, which does not appear to have ever been formally aimed at preparing for an insurgency, did include training that could be used for that purpose. Another former agency official described technical aspects of the program, like showing Ukrainians how to maintain secure communications behind enemy lines or in a “hostile intelligence environment” as potential “stay-behind force training.”

The current senior intelligence official strongly denied that the program was designed in any way “to assist in an insurgency.”

“Suggestions that we have trained an armed insurgency in Ukraine are simply false,” said Tammy Thorp, a CIA spokesperson.

Going back decades, the CIA has provided limited training to Ukrainian intelligence units to try and shore up an independent Kyiv and prevent Russian subversion, but cooperation “ramped up” after the Crimea invasion, said a former CIA executive.

Militants of the self-proclaimed Luhansk People's Republic walk at a fighting position on the line of separation from the Ukrainian armed forces near the settlement of Frunze in Luhansk Region, Ukraine Dec. 24, 2021. (Alexander Ermochenko/Reuters)

The CIA paramilitaries in Ukraine have “a very small footprint,” said the former agency executive, and are helping train Ukrainian forces in “potential critical nodes the Russians may focus on” if Moscow seeks to push farther into the country.

Though the agency’s paramilitary resources have been otherwise stretched thin in Afghanistan and on other counterterrorism missions, the U.S.-based training program has been a “high priority” for the CIA since its Obama-era inception, said the former senior intelligence official.

The program did not require, or receive, a new presidential finding, which is used to authorize covert action, and has been run under previously existing authorities, according to former officials.

The Trump administration — partially at the urging of Congress — later expanded funding for the initiative, increasing the number of Ukrainian cohorts brought over yearly to the U.S., according to former officials.

Training forces that could take part in an insurgency is not the same as actively supporting an insurgency if one takes place following a Russian invasion. The Biden administration has reportedly assembled a task force to determine how the CIA and other U.S. agencies could support a Ukrainian insurgency, should Russia launch a large-scale incursion.

“If the Russians invade, those [graduates of the CIA programs] are going to be your militia, your insurgent leaders,” said the former senior intelligence official. “We’ve been training these guys now for eight years. They’re really good fighters. That’s where the agency’s program could have a serious impact.”

Over the years, the CIA training programs have been “very effective,” said the former CIA executive.

It has helped “turn the tide,” said the first former CIA official, who said he or she was briefed that “gains were being made on the battlefield” as a “direct result” of the program.

Snipers in camouflage suits during the celebrations on the occasion of the 5th anniversary of the National Guard of Ukraine, Kyiv, Ukraine. (Maxym Marusenko/NurPhoto via Getty Images)

Both U.S. and Ukrainian officials believe that Ukrainian forces will not be able to withstand a large-scale Russian incursion, according to former U.S. officials. But representatives from both countries also believe that Russia won’t be able to hold on to new territory indefinitely because of stiff resistance from Ukrainian insurgents, according to former officials.

Working so closely with the Ukrainians has presented unique challenges, according to former officials. For years, U.S. officials have believed that, because of Russia’s web of spies within Ukraine’s intelligence services, the program has very likely been compromised by Moscow.

Senior Trump administration officials discussed worries about Russian penetration of the program with their Ukrainian counterparts, according to a former national security official. The Ukrainians, well aware of the issue, have tried to vet the U.S.-bound trainees to weed out moles, according to former officials.

Still, Trump-era National Security Council officials established a rule not to tell the Ukrainians anything they weren’t comfortable with the Russians subsequently learning about, recalled the former national security official.

A small number of trainees in the earlier U.S.-based cohorts were sent back to Ukraine for breaking security rules, like possessing unauthorized electronic devices, according to the first former CIA official.

CIA officials also believed their trainees were being targeted by the Russians once they returned to Ukraine. “Russians and traitorous Russian loyalists within the Ukrainian security services were seeking out graduates of those classes to assassinate,” said the former CIA official.

Forensic police experts and military intelligence examine the wreckage of a car in Kyiv. The commander of Ukraine's military intelligence special ops unit colonel Maksym Shapoval was killed by a bomb attached to the bottom of his vehicle in central Kyiv. (Sergii Kharchenko/Pacific Press/LightRocket via Getty Images)

Russian penetration of Ukrainian intelligence has been a long-standing problem for the CIA, according to former intelligence officials. For decades, the agency has tried to work only with special select Ukrainian units — some created at the agency’s insistence — that have been isolated from the rest of the country’s intelligence services in order to prevent Russian compromise, according to former officials.

Even though the CIA assumes some Russian compromise when working with the Ukrainians, the agency still believes the training program has been, on balance, highly valuable, according to former officials.

If the Russians launch a new invasion, “there’s going to be people who make their life miserable,” said the former senior intelligence official. The CIA-trained paramilitaries “will organize the resistance” using the specialized training they’ve received.

“All that stuff that happened to us in Afghanistan,” said the former senior intelligence official, “they can expect to see that in spades with these guys.”